- Karol Sobota

Regardless of the technology we use to develop an application, it is wise to test the features we build. In this article, we will look at how writing integration tests can make it easier to develop an application based on the Camunda process engine.

Example business process

For the purpose of this article, we present an example solution: an application for managing the recruitment of new employees. The business process flow of this application may look as follows:

The process performs three main operations, each marked as a block:

- Recruiter selection – selecting the recruiter responsible for the application

- Review – the recruiter reviews the application

- Response sending – sending the response back to the candidate

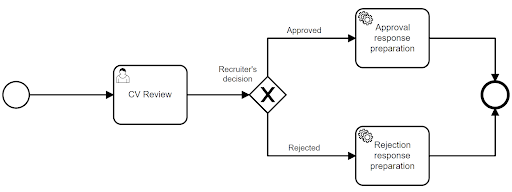

Since the application review step can be complex, it is modelled as a separate subprocess. Here is its diagram:

As we can see, the subprocess starts with a user task, in which the recruiter decides whether to accept or reject the candidate's application. That decision determines which feedback message is prepared for the candidate and, therefore, which service task is launched: either Prepare approval response or Prepare rejection response.
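To make the routing in the review subprocess concrete, here is a minimal plain-Java model of its exclusive gateway. It is a sketch, not Camunda code: it assumes the gateway conditions compare the decision variable with "approved" and "rejected" (the values the tests in this article pass), and the class and method names are hypothetical. The returned names are the bean names of the service tasks defined later.

```java
import java.util.Map;

// Plain-Java model of the exclusive gateway in review_process (not Camunda code).
// Assumption: the gateway conditions compare the "decision" variable with
// "approved" and "rejected".
final class ReviewGateway {

    // Maps each decision value to the bean name of the service task it leads to.
    private static final Map<String, String> ROUTES = Map.of(
            "approved", "approval_response_preparation",
            "rejected", "rejection_response_preparation");

    private ReviewGateway() {
    }

    // Returns the service task that follows the cv_review user task.
    // The decision must not be null (Map.of rejects null lookups).
    static String responseTaskFor(String decision) {
        String task = ROUTES.get(decision);
        if (task == null) {
            // Unknown decision: no outgoing path can be selected.
            throw new IllegalArgumentException("No route for decision: " + decision);
        }
        return task;
    }
}
```

In this model, an unknown decision fails fast, which loosely corresponds to an exclusive gateway without a default flow that cannot select any outgoing sequence flow.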

When implementing the above-described process, we would like to test all its elements and all the possible paths of the process from start to finish. Let’s see how this approach looks in practice.
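Testing all the possible paths can be made explicit by listing them. The following plain-Java sketch (a hypothetical class, not part of the project) enumerates the start-to-finish paths of the recruitment process, assuming the step names used in this article: the delegate bean names plus the cv_review user task.

```java
import java.util.ArrayList;
import java.util.List;

// Plain-Java sketch (not Camunda code) listing every start-to-finish path of
// the recruitment process, i.e. the cases an end-to-end test suite has to cover.
final class RecruitmentPaths {

    private RecruitmentPaths() {
    }

    static List<List<String>> allPaths() {
        // Alternatives at the exclusive gateway inside the review subprocess.
        List<String> reviewOutcomes = List.of(
                "approval_response_preparation",
                "rejection_response_preparation");

        List<List<String>> paths = new ArrayList<>();
        for (String outcome : reviewOutcomes) {
            // Every path shares the steps before and after the gateway.
            paths.add(List.of("recruiter_selection", "cv_review", outcome, "response_sending"));
        }
        return paths;
    }
}
```

With a single two-way gateway there are exactly two paths, which is why the end-to-end approach below needs two tests.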

Application implementation in Spring Boot

We created an example application that uses the embedded Camunda engine. In one of our previous posts, we explain the difference between Camunda Embedded and Standalone.

Preparation of the application

We generated the project using the https://start.camunda.com/ tool and then prepared BPMN files containing the definitions of the processes presented earlier:

- recruitment_process.bpmn – the main recruitment process

- review_process.bpmn – the review subprocess

We then added a sample implementation for each service task. For convenience, the implementations are simplified so that they only log a basic message. The service task definitions look as follows:

Recruiter selection

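```java
import lombok.RequiredArgsConstructor;
import lombok.extern.slf4j.Slf4j;
import org.camunda.bpm.engine.delegate.DelegateExecution;
import org.camunda.bpm.engine.delegate.JavaDelegate;
import org.springframework.stereotype.Service;

@Slf4j
@Service("recruiter_selection")
@RequiredArgsConstructor
public class RecruiterSelectionDelegate implements JavaDelegate {

    @Override
    public void execute(DelegateExecution delegateExecution) {
        // Simplified implementation: only logs a message
        log.info("Selecting recruiter");
    }
}
```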

Approval response preparation

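```java
import lombok.extern.slf4j.Slf4j;
import org.camunda.bpm.engine.delegate.DelegateExecution;
import org.camunda.bpm.engine.delegate.JavaDelegate;
import org.springframework.stereotype.Service;

@Slf4j
@Service("approval_response_preparation")
public class ApprovalResponsePreparationDelegate implements JavaDelegate {

    @Override
    public void execute(DelegateExecution delegateExecution) {
        // Simplified implementation: only logs a message
        log.info("Preparing response after approval");
    }
}
```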

Rejection response preparation

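```java
import lombok.extern.slf4j.Slf4j;
import org.camunda.bpm.engine.delegate.DelegateExecution;
import org.camunda.bpm.engine.delegate.JavaDelegate;
import org.springframework.stereotype.Service;

@Slf4j
@Service("rejection_response_preparation")
public class RejectionResponsePreparationDelegate implements JavaDelegate {

    @Override
    public void execute(DelegateExecution delegateExecution) {
        // Simplified implementation: only logs a message
        log.info("Preparing response after rejection");
    }
}
```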

Response sending

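```java
import lombok.extern.slf4j.Slf4j;
import org.camunda.bpm.engine.delegate.DelegateExecution;
import org.camunda.bpm.engine.delegate.JavaDelegate;
import org.springframework.stereotype.Service;

@Slf4j
@Service("response_sending")
public class ResponseSendingDelegate implements JavaDelegate {

    @Override
    public void execute(DelegateExecution delegateExecution) {
        // Simplified implementation: only logs a message
        log.info("Sending response");
    }
}
```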

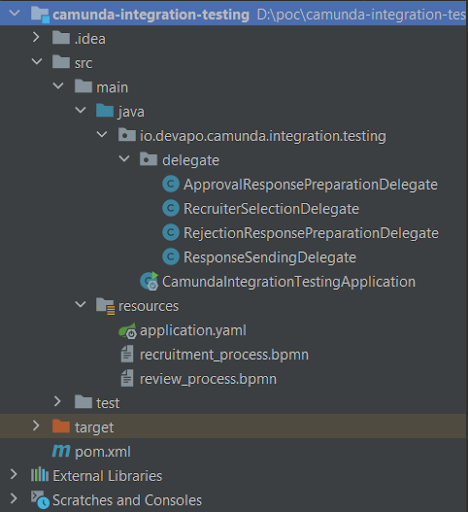

After adding the above definitions, the structure of our application, is as follows:

Adding tests

We wrote the tests using the Spock and Testcontainers libraries. You can read more about them in one of our previous articles: Testcontainers: how to write reliable tests. To use them, we added the following dependencies to the project:

```xml
<dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-test</artifactId>
    <scope>test</scope>
</dependency>
<dependency>
    <groupId>org.spockframework</groupId>
    <artifactId>spock-core</artifactId>
    <version>2.1-groovy-3.0</version>
    <scope>test</scope>
</dependency>
<dependency>
    <groupId>org.spockframework</groupId>
    <artifactId>spock-spring</artifactId>
    <version>2.1-groovy-3.0</version>
    <scope>test</scope>
</dependency>
<dependency>
    <groupId>org.testcontainers</groupId>
    <artifactId>testcontainers</artifactId>
    <version>1.17.1</version>
    <scope>test</scope>
</dependency>
<dependency>
    <groupId>org.testcontainers</groupId>
    <artifactId>spock</artifactId>
    <version>1.17.1</version>
    <scope>test</scope>
</dependency>
<dependency>
    <groupId>org.testcontainers</groupId>
    <artifactId>postgresql</artifactId>
    <version>1.17.1</version>
    <scope>test</scope>
</dependency>
```

We now get to the main point of the article: the first integration tests of our process. As mentioned earlier, we want to verify every path of the process from start to finish. To do this, we create a test class containing our first test:

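```groovy
import org.camunda.bpm.engine.RuntimeService
import org.camunda.bpm.engine.TaskService
import org.camunda.bpm.engine.task.Task
import org.springframework.beans.factory.annotation.Autowired
import org.springframework.boot.test.context.SpringBootTest
import org.springframework.test.context.ActiveProfiles
import spock.lang.Specification

import static org.springframework.boot.test.context.SpringBootTest.WebEnvironment.RANDOM_PORT

@SpringBootTest(webEnvironment = RANDOM_PORT)
@ActiveProfiles("test")
class RecruitmentSpecIT extends Specification {

    @Autowired
    RuntimeService runtimeService

    @Autowired
    TaskService taskService

    def "should approve application"() {
        given:
        startProcess()

        when:
        makeDecision("approved")

        then:
        noExceptionThrown()
    }

    private void startProcess() {
        runtimeService.startProcessInstanceByKey("recruitment_process")
    }

    // Completes the cv_review user task, passing the recruiter's decision as a process variable
    private void makeDecision(String decision) {
        Task reviewTask = taskService.createTaskQuery()
                .taskDefinitionKey("cv_review")
                .singleResult()
        taskService.complete(reviewTask.getId(), Map.of("decision", decision))
    }
}
```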

As we can see, the test first starts the main recruitment_process. It then completes the cv_review user task, passing the decision variable with the value “approved”. Let's look at what this means in practice. After running the test, the following messages should appear in the log:

```
2022-05-09 23:19:27.302 INFO 23488 --- [ main] i.d.c.i.t.d.RecruiterSelectionDelegate : Selecting recruiter
2022-05-09 23:19:27.407 INFO 23488 --- [ main] .t.d.ApprovalResponsePreparationDelegate : Preparing response after approval
2022-05-09 23:19:27.423 INFO 23488 --- [ main] i.d.c.i.t.d.ResponseSendingDelegate : Sending response
```

The above output shows that three service tasks were executed: RecruiterSelectionDelegate, ApprovalResponsePreparationDelegate and ResponseSendingDelegate. This means that our process successfully went through one of its two available paths.

Building on this, let's add a second test:

```groovy
def "should reject application"() {
    given:
    startProcess()

    when:
    makeDecision("rejected")

    then:
    noExceptionThrown()
}
```

We run the tests again, and this time the log contains the following:

```
2022-05-09 23:43:49.447 INFO 24852 --- [ main] i.d.c.i.t.d.RecruiterSelectionDelegate : Selecting recruiter
2022-05-09 23:43:49.541 INFO 24852 --- [ main] t.d.RejectionResponsePreparationDelegate : Preparing response after rejection
2022-05-09 23:43:49.555 INFO 24852 --- [ main] i.d.c.i.t.d.ResponseSendingDelegate : Sending response
```

The result of the second test is very similar: only the service task called in the second step has changed. Since the log now contains an entry from RejectionResponsePreparationDelegate instead of ApprovalResponsePreparationDelegate, we know that the process successfully went through its second available path.
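Both tests only assert noExceptionThrown(), so which path was taken can only be confirmed by reading the log. One possible improvement, sketched here under our own assumptions, is a small recorder bean that each delegate would call next to its log.info(...), so that the test can assert on the executed steps. ExecutionRecorder is a hypothetical helper, not part of Camunda or of the example project.

```java
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;

// Hypothetical test helper: each delegate would call record(...) alongside its
// log.info(...), so a test can assert on the executed steps instead of reading the log.
final class ExecutionRecorder {

    // Thread-safe list, in case delegates ever run on job executor threads
    // (asynchronous continuations).
    private final List<String> steps = new CopyOnWriteArrayList<>();

    void record(String step) {
        steps.add(step);
    }

    // Immutable snapshot of the steps recorded so far, in execution order.
    List<String> steps() {
        return List.copyOf(steps);
    }

    // Called between tests so that one test's steps do not leak into the next.
    void clear() {
        steps.clear();
    }
}
```

In the Spock specification, the recorder would be autowired and cleared in setup(), and the then: block could compare recorder.steps() with the expected list, for example ["recruiter_selection", "approval_response_preparation", "response_sending"].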

At this point, we could stop and conclude that we have achieved our goal of testing the application's process. However, the tests we have written have two flaws; let's examine them in practice.

Verification of multiple operations within the same test

To demonstrate the first flaw, we modify the execute method in the RecruiterSelectionDelegate class as follows:

```java
@Override
public void execute(DelegateExecution delegateExecution) {
    log.info("Selecting recruiter");
    // Intentional failure that simulates a bug in this service task
    throw new RuntimeException();
}
```

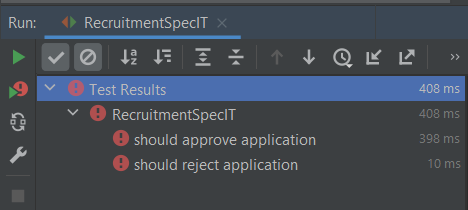

We then run the entire test class again, resulting in:

As we can see, although we introduced the error in only one service task, it made every test in the class fail.

To prevent this, let's change both tests so that they call a new startSubprocess() method instead of startProcess(). It starts the review subprocess directly:

```groovy
private void startSubprocess() {
    runtimeService.startProcessInstanceByKey("review_process")
}
```

When we rerun the tests, they should pass, but this time the log shows the following:

“should approve application”:

```
2022-05-10 00:31:39.018 INFO 1244 --- [ main] .t.d.ApprovalResponsePreparationDelegate : Preparing response after approval
```

“should reject application”:

```
2022-05-10 00:31:39.074 INFO 1244 --- [ main] t.d.RejectionResponsePreparationDelegate : Preparing response after rejection
```

This means that this time only the service tasks of the review_process subprocess were executed, and the process ended once they completed. Because we started the subprocess on its own, it has no parent process instance to return to, so the remaining steps of the main process are never executed.
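The effect described in this section can be captured in a small plain-Java model. It is not Camunda code: each test variant is reduced to the list of service tasks it executes (as seen in the logs above), and a test counts as failed if it executes any failing step. The class name and the test labels are hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.stream.Collectors;

// Plain-Java model (not Camunda code) of why one failing service task breaks
// every end-to-end test but none of the subprocess-only tests.
final class FailureImpact {

    private FailureImpact() {
    }

    // Service tasks executed by each test variant; insertion order is preserved.
    static Map<String, List<String>> testSteps() {
        Map<String, List<String>> tests = new LinkedHashMap<>();
        tests.put("approve via startProcess",
                List.of("recruiter_selection", "approval_response_preparation", "response_sending"));
        tests.put("reject via startProcess",
                List.of("recruiter_selection", "rejection_response_preparation", "response_sending"));
        tests.put("approve via startSubprocess", List.of("approval_response_preparation"));
        tests.put("reject via startSubprocess", List.of("rejection_response_preparation"));
        return tests;
    }

    // Names of the tests that execute at least one failing step.
    static List<String> failingTests(Set<String> failingSteps) {
        return testSteps().entrySet().stream()
                .filter(entry -> entry.getValue().stream().anyMatch(failingSteps::contains))
                .map(Map.Entry::getKey)
                .collect(Collectors.toList());
    }
}
```

With recruiter_selection failing, only the two startProcess()-based tests fail; with approval_response_preparation failing, exactly one test from each group fails.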

How, then, can we verify the service tasks of the main process?

For the first one, Recruiter selection, it is enough to start the main process, as we did in the first approach. However, if we want to verify only this first service task, we must manually delete the process instance after starting it. Since the test does not complete the cv_review user task, the process would otherwise remain in a wait state and could affect the other tests. For example, a leftover cv_review task would make the singleResult() query in makeDecision() match more than one task, which makes Camunda throw an exception.

To put this into practice, let's modify startProcess() so that it returns the ID of the created process instance:

```groovy
private String startProcess() {
    return runtimeService.startProcessInstanceByKey("recruitment_process").id
}
```

We use this ID to delete the process instance in the test's cleanup block, which Spock runs even if an earlier block fails:

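```groovy
def "should successfully start process"() {
    when:
    String processId = startProcess()

    then:
    noExceptionThrown()

    cleanup:
    // The instance waits at the cv_review user task, so delete it to keep other tests isolated.
    // The null check covers the case where startProcess() itself failed.
    if (processId) {
        runtimeService.deleteProcessInstance(processId, "")
    }
}
```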
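The risk of leaving a process instance waiting can be illustrated with a simplified plain-Java model. It is a sketch under our own assumptions, not Camunda's implementation: InMemoryTaskStore is hypothetical, and singleReviewTask() only imitates the contract of Camunda's singleResult(), which returns null when nothing matches and throws when more than one result matches (Camunda throws a ProcessEngineException; the model uses IllegalStateException).

```java
import java.util.ArrayList;
import java.util.List;
import java.util.UUID;

// Plain-Java model (not Camunda code) of why a process left waiting at the
// cv_review user task can break later tests that look the task up with singleResult().
final class InMemoryTaskStore {

    // One entry per waiting cv_review task, identified by its process instance ID.
    private final List<String> waitingReviewTasks = new ArrayList<>();

    // Starting the process leaves the new instance waiting at cv_review.
    String startProcess() {
        String processInstanceId = UUID.randomUUID().toString();
        waitingReviewTasks.add(processInstanceId);
        return processInstanceId;
    }

    // Imitates singleResult(): null for no match, an error for more than one match.
    String singleReviewTask() {
        if (waitingReviewTasks.size() > 1) {
            throw new IllegalStateException("Query returned " + waitingReviewTasks.size() + " results");
        }
        return waitingReviewTasks.isEmpty() ? null : waitingReviewTasks.get(0);
    }

    // Counterpart of runtimeService.deleteProcessInstance(processId, "") in the cleanup block.
    void deleteProcessInstance(String processInstanceId) {
        waitingReviewTasks.remove(processInstanceId);
    }
}
```

If a test such as "should successfully start process" skipped its cleanup block, a later test calling makeDecision() could find two waiting cv_review tasks and fail, even though its own logic is correct.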

On the other hand, how can we test the Response sending service task without going through the whole process? To start the process at a chosen point more conveniently, we add the following dependency to the project:

```xml
<dependency>
    <groupId>org.camunda.bpm.springboot</groupId>
    <artifactId>camunda-bpm-spring-boot-starter-test</artifactId>
    <scope>test</scope>
</dependency>
```

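This dependency provides a number of utility classes that simplify working with processes in tests. One of them is BpmnAwareTests, which gives static access to the engine services, such as runtimeService(). With it, we can start a process before a selected activity; the startBeforeActivity() call itself belongs to Camunda's process instantiation API, so the same chain also works on the autowired runtimeService. In our case, the method looks as follows: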

```groovy
// Requires: import org.camunda.bpm.engine.test.assertions.bpmn.BpmnAwareTests
private void startProcessBeforeActivity() {
    BpmnAwareTests.runtimeService()
            .createProcessInstanceByKey("recruitment_process")
            .startBeforeActivity("response_sending")
            .execute()
}
```

With this method, the test for the Response sending task comes down to:

```groovy
def "should send response to candidate"() {
    when:
    startProcessBeforeActivity()

    then:
    noExceptionThrown()
}
```
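Conceptually, startBeforeActivity() lets the process skip every step before the chosen activity. The plain-Java model below illustrates only that idea; it is not how Camunda implements process instantiation. It assumes the main process is a linear sequence and represents the whole review subprocess as a single hypothetical step named review_process.

```java
import java.util.List;

// Plain-Java model (not Camunda code) of starting a process "before" a given
// activity: only that activity and the ones after it are executed.
final class StartBeforeActivity {

    // Assumed linear order of the main process; the review subprocess is one step here.
    static final List<String> MAIN_PROCESS =
            List.of("recruiter_selection", "review_process", "response_sending");

    private StartBeforeActivity() {
    }

    static List<String> executedSteps(String startBefore) {
        int index = MAIN_PROCESS.indexOf(startBefore);
        if (index < 0) {
            throw new IllegalArgumentException("Unknown activity: " + startBefore);
        }
        // subList returns a view; copy it so callers get an independent, immutable list.
        return List.copyOf(MAIN_PROCESS.subList(index, MAIN_PROCESS.size()));
    }
}
```

In this model, executedSteps("response_sending") yields only response_sending, which is exactly the part the "should send response to candidate" test is meant to cover.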

Verification of the same operations over multiple tests

With the first approach, two different tests performed almost the same operations. In the example presented, executing two redundant service tasks is not a problem, and running the whole process from start to finish each time is arguably more reliable. However, as the application evolves, new features will likely be added. Eventually, testing two almost identical paths may require executing dozens of tasks that have no bearing on the cases being verified.
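This growth can be estimated with simple arithmetic. Assume, purely for illustration, that every path executes the same S shared service tasks plus one task specific to its branch of the gateway, and that there are B branches. Running each path end to end executes B(S + 1) service tasks, while covering every task once with targeted tests needs S + B, so S(B - 1) executions are redundant. A minimal Java version of this estimate (the class is hypothetical):

```java
// Back-of-the-envelope model (an assumption, not a measurement): every path runs
// the same sharedTasks service tasks plus one task specific to its branch.
final class RedundancyEstimate {

    private RedundancyEstimate() {
    }

    // Service task executions when every test runs the process from start to finish.
    static int endToEndExecutions(int sharedTasks, int branches) {
        return branches * (sharedTasks + 1);
    }

    // Executions when each task is covered by exactly one targeted test.
    static int targetedExecutions(int sharedTasks, int branches) {
        return sharedTasks + branches;
    }

    // Executions that do not contribute to the case being verified: sharedTasks * (branches - 1).
    static int redundantExecutions(int sharedTasks, int branches) {
        return endToEndExecutions(sharedTasks, branches) - targetedExecutions(sharedTasks, branches);
    }
}
```

For the process in this article (S = 2: Recruiter selection and Response sending; B = 2), this gives 6 versus 4 executions, i.e. the two redundant service tasks mentioned above. With 30 shared tasks, the same two paths would already cost 30 redundant executions.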

Summary

In this article, we discussed ways of writing process tests for an application based on the Camunda process engine. Having analyzed both approaches and their drawbacks, we can see that committing to only one of them forces a trade-off: either some tasks are tested redundantly, or the behavior of the process as a whole is not verified accurately.

In our opinion, the best solution is therefore to combine both approaches: some tests should verify the entire process from start to finish, while complex subprocesses can be tested independently.

We hope these considerations will help you choose the right approach to writing process tests and, consequently, develop your applications faster.
